Infrastructure MOC: Network Architecture & 3-2-2 Backup

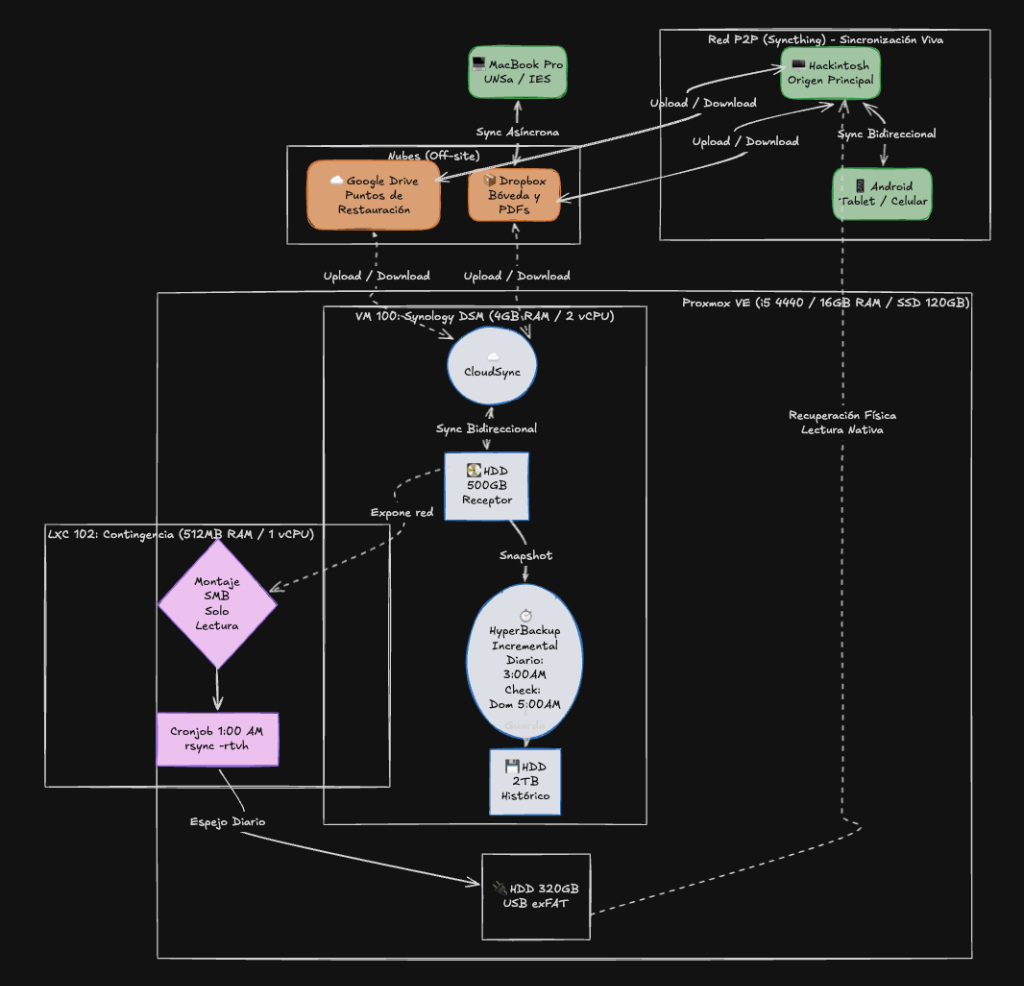

Infrastructure topology implementing a 3-2-2 backup strategy with Proxmox and Synology.

📌 General System Topology

🎯 Design Purpose

This infrastructure implements an advanced 3-2-2 strategy to provide high availability, real-time synchronization, and tolerance to catastrophic failures. The system is logically divided into two major management tiers: an agile micro-backup at the application level (for notes) and a heavy macro-backup at the infrastructure level (for all data).

💻 Hardware Ecosystem & Resource Allocation

To contextualize the topology, this architecture relies on the following physical and virtual nodes:

- Main Workstation (Main PC): Desktop Hackintosh. Acts as the primary origin and heavy lifter for vault organization.

- Academic Workstation: MacBook Pro 2015. Mobile node for university (IES/UNSa), asynchronously synced via Dropbox (Tier 1).

- Light Touch Environment: Android Ecosystem. Operates as an ultra-light client synced in real-time via Syncthing (Tier 3).

- Homelab Server (Proxmox VE): Physical node (Intel i5 4440 / 16GB RAM / SSD 120GB) acting as a hypervisor for the following services:

  - VM 100 (Synology DSM): Virtualized NAS with allocated resources (4GB RAM / 2 vCPU). Directly manages a 500GB HDD (Receiver / CloudSync) and a 2TB HDD (Historical / HyperBackup).

  - LXC 102 (Contingency): Ultra-light Debian 12 container (512MB RAM / 1 vCPU) responsible for isolating the execution of the rsync cronjob.

- Off-Grid Storage: 320GB external USB HDD (exFAT format). Designed for physical disconnection and immediate native reading on macOS/Windows in the event of a catastrophic server failure.
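The allocations above can be sanity-checked with a little arithmetic. The following is a minimal sketch, not part of the actual setup: the RAM figures come from the list above, while the function names, the 10% free-space reserve, and the example data sizes are illustrative assumptions. It also makes one constraint explicit: the 320GB off-grid drive is smaller than the 500GB primary drive, so a full clone only fits while the data actually in use stays below the USB capacity.

```python
from typing import Mapping

# RAM figures taken from the hardware list; the dictionary layout is illustrative.
HOST_RAM_MB = 16 * 1024
GUEST_RAM_MB = {
    "VM 100 (Synology DSM)": 4 * 1024,
    "LXC 102 (Contingency)": 512,
}


def ram_headroom_mb(host_mb: int, guests: Mapping[str, int]) -> int:
    """Return host RAM left after all guest allocations (negative = overcommitted)."""
    return host_mb - sum(guests.values())


def fits_on_target(used_gb: float, target_gb: float, reserve: float = 0.10) -> bool:
    """True if used_gb of data fits on the target while keeping a free-space reserve.

    The 10% default reserve is an assumption, not a value from this note.
    """
    return used_gb <= target_gb * (1.0 - reserve)


if __name__ == "__main__":
    print("RAM headroom (MB):", ram_headroom_mb(HOST_RAM_MB, GUEST_RAM_MB))
    # 250 is a hypothetical amount of data in use on the 500GB drive.
    print("Fits on 320GB USB:", fits_on_target(250, 320))
```

With these figures the two guests reserve 4608 MB, which leaves most of the host's 16GB free for Proxmox itself and any future containers.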

🛡️ Tier 1: Micro-Backup (Application Level / Obsidian)

This tier is exclusively responsible for safeguarding knowledge vaults.

- The Flow: Live vaults sync natively through Dropbox. In addition, an Obsidian plugin generates self-contained backup archives.

- Action Mechanism: The `Local Backup` plugin compresses the entire vault into a `.zip` file and exports it to Google Drive as a historical restore point.

- Profile Management: To avoid duplicating processing load and wasting battery, different profiles are applied per device:

  - Main Machine (Hackintosh): Performs the heavy lifting and the actual upload of the `.zip` to Google Drive.

  - Secondary Machine (MacBook): Shares the core profile (plugins and shortcuts), but via a “mirrored” path saves the `.zip` locally without requiring the Google Drive client in the background.

  - Mobile (Android): Maintains a completely isolated profile (`.obsidian-mobile`) to prioritize speed and touch performance.
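To make the restore-point idea concrete, here is a minimal Python sketch of what such a backup amounts to: compressing a vault folder into a timestamped `.zip`. This is not the plugin's actual implementation. The function name, the archive naming scheme, and the example paths are assumptions chosen for illustration, and the sketch assumes the destination folder lives outside the vault.

```python
import zipfile
from datetime import datetime
from pathlib import Path
from typing import Optional


def archive_vault(vault_dir: Path, dest_dir: Path, now: Optional[datetime] = None) -> Path:
    """Compress every file under vault_dir into a timestamped .zip in dest_dir.

    The name pattern "<vault>-YYYY-MM-DD_HH-MM-SS.zip" is an illustrative choice.
    """
    now = now or datetime.now()
    dest_dir.mkdir(parents=True, exist_ok=True)
    archive = dest_dir / f"{vault_dir.name}-{now:%Y-%m-%d_%H-%M-%S}.zip"
    with zipfile.ZipFile(archive, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(vault_dir.rglob("*")):
            # Skip directories, and skip the archive itself in case dest_dir
            # was (against the stated assumption) placed inside the vault.
            if path.is_file() and path.resolve() != archive.resolve():
                zf.write(path, path.relative_to(vault_dir).as_posix())
    return archive


if __name__ == "__main__":
    # Hypothetical paths; on the main machine dest_dir would be a folder
    # watched by the Google Drive client.
    print(archive_vault(Path.home() / "Vault", Path.home() / "VaultBackups"))
```

Pointing `dest_dir` at a Google Drive folder on the main machine and at a plain local folder on the MacBook would mirror the per-device profiles described above.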

🔗 Linked Documentation:

🏗️ Tier 2: Macro-Backup (Infrastructure Level / Proxmox & NAS)

This is the main server engine (i5 4440 / 16GB RAM / SSD 120GB). Its goal is to agnostically protect the entire digital ecosystem, regardless of file type.

- Operational Bridge (CloudSync): The NAS virtual machine (Synology DSM) acts as the central synchronization engine. It works bidirectionally with the clouds (Google Drive and Dropbox) on the primary 500GB drive. This provides total flexibility to modify files from any environment (local or cloud). The inherent risk of error propagation or accidental deletions from this approach is mitigated by the following two security layers.

- Time Machine (HyperBackup): Every day at 3:00 AM, the NAS takes an incremental “snapshot” of the 500GB drive and saves it to the secondary 2TB drive. This protects against ransomware encryption or accidental deletions synchronized from the cloud.

- Extreme Contingency (LXC Rsync): The last line of defense. An LXC container in Proxmox, operating in isolation from the NAS, executes an automated rsync script at 1:00 AM.

  - Via a read-only connection, it reads the NAS data and clones it to a 320GB USB drive formatted as exFAT.

  - Near-Zero RTO: In the event of a total server or motherboard failure, this drive can be physically disconnected and read natively on the Hackintosh, so work can resume almost immediately.
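The contingency job described above could be sketched as a small Python wrapper around rsync. This is an illustration under stated assumptions, not the script actually running in LXC 102: the mount points `/mnt/nas` and `/mnt/usb-contingency`, the function names, and the choice of flags are all hypothetical. The flags reflect two facts about the target: exFAT does not store Unix permissions or ownership, so the sketch uses `--recursive --times` instead of `--archive`, and `--modify-window=1` tolerates small timestamp differences between filesystems. The mount check prevents the job from silently filling the container's own disk when the USB drive is unplugged.

```python
import os
import subprocess
from typing import List


def build_rsync_command(source: str, dest: str, mirror: bool = True,
                        dry_run: bool = False) -> List[str]:
    """Build an rsync invocation suited to an exFAT target (assumed flag set)."""
    cmd = ["rsync", "--recursive", "--times", "--modify-window=1"]
    if mirror:
        # Mirror mode: files deleted on the source are also removed from the clone.
        cmd.append("--delete")
    if dry_run:
        cmd.append("--dry-run")
    # Trailing slashes make rsync copy the directory contents, not the directory.
    cmd += [source.rstrip("/") + "/", dest.rstrip("/") + "/"]
    return cmd


def run_contingency_backup(source: str, dest: str, dry_run: bool = False) -> int:
    """Run the clone only if dest is a real mount point; return rsync's exit code."""
    if not os.path.ismount(dest):
        raise RuntimeError(f"{dest} is not mounted; refusing to write to local disk")
    return subprocess.run(build_rsync_command(source, dest, dry_run=dry_run)).returncode


if __name__ == "__main__":
    # Hypothetical mount points inside the container.
    # A matching crontab entry for 1:00 AM would start with: 0 1 * * *
    raise SystemExit(run_contingency_backup("/mnt/nas", "/mnt/usb-contingency"))
```

One design trade-off worth noting: with `--delete`, a deletion on the NAS reaches the USB clone at the next 1:00 AM run, so point-in-time recovery still depends on the HyperBackup layer.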

🔗 Linked Documentation:

🔄 Tier 3: Live P2P Sync (Mobile Environment)

To achieve near-instant information flow and keep the touch environment ultra-light, without depending on the latency or overhead of traditional cloud synchronization, a dedicated Peer-to-Peer (P2P) tunnel is implemented for mobile devices.

- Tool: Syncthing.

- Goal: To link the base workstation (Hackintosh) directly and bidirectionally with the Android ecosystem (Tablet/Phone).

- Laptop Exclusion (Tier 1 Integration): The academic MacBook is exempt from this P2P ring. Being a full desktop environment, it couples with Tier 1 using Dropbox (with selective vault synchronization). This takes advantage of asynchronous synchronization: content can be created offline in `00_inbox`, synced with a brief internet connection (tethering), and the device powered off, leaving the main machine to absorb and organize the data later.

🔗 Linked Documentation:
